Core Features

Everything you need to build AI-powered applications

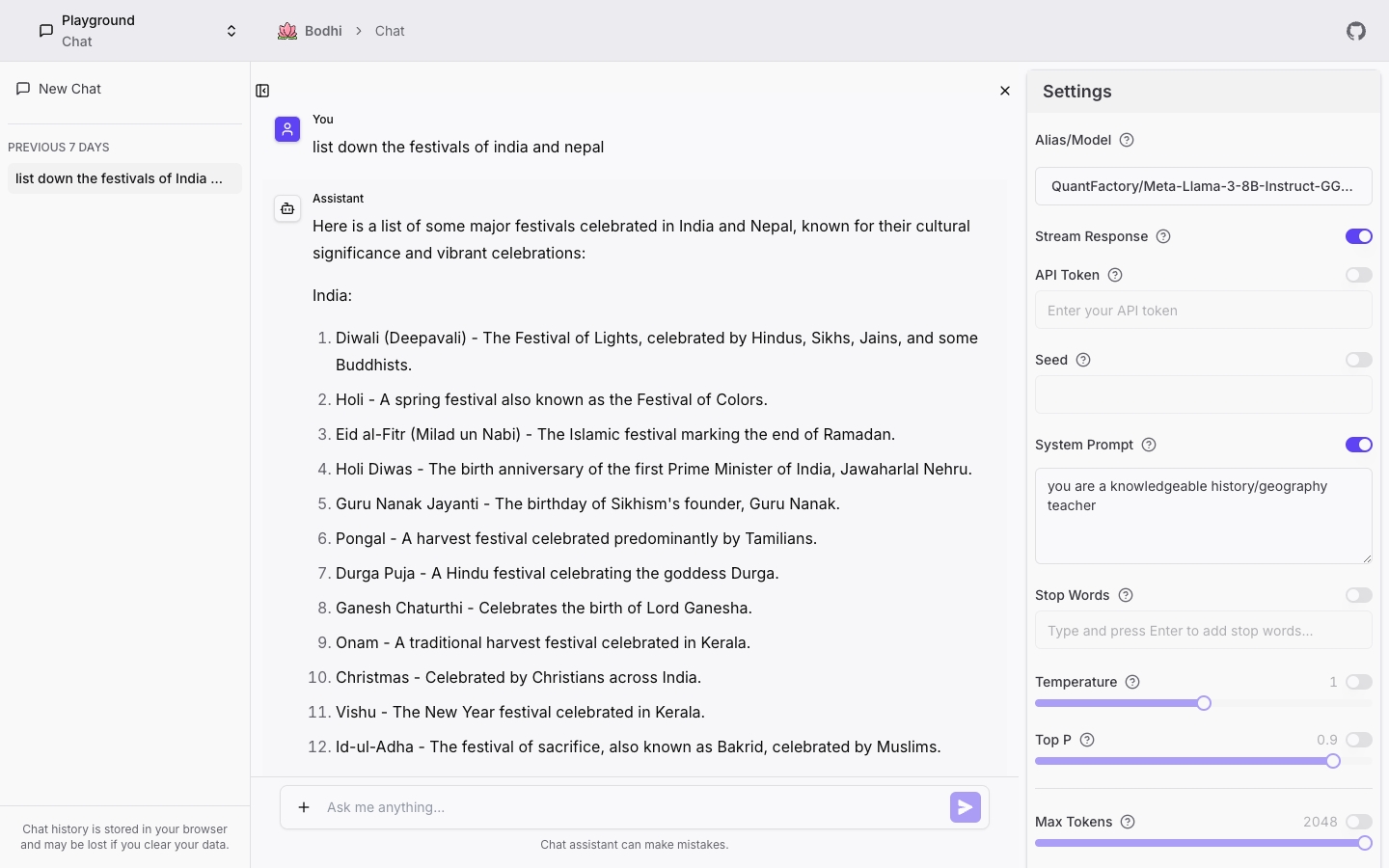

User Experience

Use local GGUF models alongside cloud API providers and MCP tools in one unified interface.

Server-Sent Events provide instant response feedback with live token streaming.
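As a rough illustration of what consuming such a stream can look like, the sketch below parses Server-Sent Events lines into text fragments. It assumes the events carry OpenAI-style chat chunks (`choices[].delta.content`) and end with a `[DONE]` sentinel; that payload shape, the function name `iter_tokens`, and the sample data are assumptions for illustration, not something this page specifies.

```python
import json
from typing import Iterable, Iterator


def iter_tokens(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from SSE lines carrying OpenAI-style chat chunks (assumed format)."""
    for raw in lines:
        line = raw.strip()
        # SSE payload lines start with "data:"; blank separators and comments are skipped.
        if not line.startswith("data:"):
            continue
        payload = line[len("data:"):].strip()
        # Assumed end-of-stream sentinel, as used by OpenAI-style streams.
        if payload == "[DONE]":
            break
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


# Hand-written sample events standing in for a live response body.
sample = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "",
    "data: [DONE]",
]
print("".join(iter_tokens(sample)))
```

In a real client the `lines` argument would be the decoded lines of the HTTP response body, so each fragment can be rendered as soon as it arrives.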

12+ parameters for fine-tuning: temperature, top-p, frequency penalty, and more.
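A minimal sketch of how the three parameters named above might be attached to a chat request. The field names `temperature`, `top_p`, and `frequency_penalty` follow the OpenAI chat completions convention, which is an assumption here; the helper `build_chat_request` and the model name are hypothetical.

```python
from typing import Any, Dict, List, Optional


def build_chat_request(
    model: str,
    messages: List[Dict[str, str]],
    temperature: Optional[float] = None,
    top_p: Optional[float] = None,
    frequency_penalty: Optional[float] = None,
) -> Dict[str, Any]:
    """Build a request body, including only the tuning parameters that were set."""
    body: Dict[str, Any] = {"model": model, "messages": messages}
    optional = {
        "temperature": temperature,
        "top_p": top_p,
        "frequency_penalty": frequency_penalty,
    }
    # Unset parameters are omitted so the server's own defaults apply.
    body.update({name: value for name, value in optional.items() if value is not None})
    return body


request_body = build_chat_request(
    model="my-local-model",  # hypothetical model alias
    messages=[{"role": "user", "content": "Summarize this page."}],
    temperature=0.2,
    top_p=0.9,
)
print(request_body)
```

Leaving unset values out of the body, rather than sending `null`, keeps the request valid for servers that reject unknown or empty fields.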

Connect to MCP servers, then discover and execute their tools directly from the chat interface.

Technical Capabilities

Optimized inference with llama.cpp. 8-12x speedup with GPU acceleration (CUDA, ROCm).

Download models asynchronously with progress tracking and auto-resumption.
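One common way to resume an interrupted download is an HTTP `Range` request that asks only for the bytes not yet on disk. The sketch below shows that general technique; it is not taken from the app's implementation, and the helper names and the `.part` file name are hypothetical.

```python
import os
import tempfile
from typing import Dict


def resume_headers(partial_path: str) -> Dict[str, str]:
    """Return headers asking the server for the bytes that are not yet on disk."""
    try:
        offset = os.path.getsize(partial_path)
    except OSError:
        offset = 0  # no partial file yet, so start from the beginning
    return {"Range": f"bytes={offset}-"} if offset > 0 else {}


def open_mode(status: int) -> str:
    """Append only if the server honored the range (206); otherwise overwrite."""
    return "ab" if status == 206 else "wb"


# Demonstration with a throwaway file standing in for a partially downloaded model.
with tempfile.TemporaryDirectory() as tmp:
    part = os.path.join(tmp, "model.gguf.part")  # hypothetical file name
    fresh = resume_headers(part)
    with open(part, "wb") as fh:
        fh.write(b"\0" * 1024)
    resumed = resume_headers(part)

print(fresh, resumed)
```

Checking the status code matters because a server that ignores `Range` replies with 200 and the full file, in which case appending would corrupt the download.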

Enterprise & Team Ready

Built for secure collaboration, with enterprise-grade authentication and comprehensive user management.

Comprehensive admin interface for managing users, roles, and access requests.

4 role levels (User, PowerUser, Manager, Admin) with granular permission management.
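The four roles can be modeled as an ordered enumeration. This sketch assumes the roles form a strict hierarchy in the order listed, with each level inheriting the ones below it; the page does not state this, and the `Role` and `can_access` names are hypothetical.

```python
from enum import IntEnum


class Role(IntEnum):
    """Role levels in the order listed above (ordering is an assumption)."""
    USER = 1
    POWER_USER = 2
    MANAGER = 3
    ADMIN = 4


def can_access(actual: Role, required: Role) -> bool:
    """A role satisfies a requirement if it is at or above the required level."""
    return actual >= required


print(can_access(Role.MANAGER, Role.POWER_USER))
print(can_access(Role.USER, Role.ADMIN))
```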

Self-service access requests with admin approval gates and audit trail.

Enterprise-grade authentication with PKCE, session management, and token lifecycle control.
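For readers unfamiliar with PKCE, the sketch below generates a code verifier and its `S256` code challenge as defined in RFC 7636. It shows the standard mechanism only; how the app wires it into its login flow is not described here, and the function names are illustrative.

```python
import base64
import hashlib
import secrets


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as RFC 7636 requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def new_code_verifier() -> str:
    """Create a high-entropy verifier; 32 random bytes encode to 43 characters."""
    return _b64url(secrets.token_bytes(32))


def code_challenge(verifier: str) -> str:
    """Derive the S256 challenge: BASE64URL(SHA256(ASCII(verifier)))."""
    return _b64url(hashlib.sha256(verifier.encode("ascii")).digest())


verifier = new_code_verifier()
print(verifier, code_challenge(verifier))
```

The client sends the challenge with the authorization request and the verifier with the token request, so an intercepted authorization code cannot be redeemed without the verifier.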

Secure team collaboration with session invalidation and role change enforcement.

Review and approve third-party app access with resource-level consent controls.

Developer Tools & SDKs

Production-ready tools with everything developers need to integrate AI into their applications.

React hooks and components via @bodhiapp/bodhi-js-react for seamless AI integration.

Scope-based permissions with SHA-256 hashing and database-backed security.

Interactive API documentation with auto-generated specs and live testing.

Drop-in replacement for OpenAI APIs: use your existing libraries and tools seamlessly.
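In practice, OpenAI compatibility usually means an existing client only needs a different base URL. The sketch below builds (but does not send) a chat completions request with the standard library. The base URL, port, model alias, and helper name are assumptions for illustration; check your own installation for the actual address.

```python
import json
import urllib.request
from typing import Optional

# Assumed local address; adjust to wherever your server is listening.
BASE_URL = "http://localhost:1135/v1"


def chat_request(
    base_url: str, model: str, prompt: str, api_key: Optional[str] = None
) -> urllib.request.Request:
    """Build a POST request for the OpenAI-style /chat/completions endpoint."""
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers=headers,
        method="POST",
    )


req = chat_request(BASE_URL, "my-local-model", "Hello")  # hypothetical model alias
print(req.get_method(), req.full_url)
# Passing req to urllib.request.urlopen would send it to a running server.
```

The same idea applies to OpenAI client libraries that accept a custom base URL: point them at the local server and leave the rest of the calling code as it is.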

Resource consent API for third-party apps with granular permission scoping.

Flexible Deployment Options

Deploy anywhere, from desktop to cloud, with hardware-optimized variants for maximum performance.

Native desktop apps for Windows, macOS (Intel/ARM), and Linux with Tauri.

7 optimized Docker images: CPU (AMD64/ARM64), CUDA, ROCm, Vulkan, MUSA, Intel, and CANN.

RunPod auto-configuration and support for any Docker-compatible cloud platform.

8-12x speedup with GPU support for NVIDIA (CUDA), AMD (ROCm), Intel, Moore Threads (MUSA), and Huawei Ascend (CANN).

Health checks, monitoring, log management, and automatic database migrations.

Download for your platform

Choose your operating system to download BodhiApp. All platforms support running LLMs locally with full privacy.

Package Managers

brew install BodhiSearch/apps/bodhi